AI Gamification of GitHub Stars?

Is anyone noticing this pattern with stereotypical AI-driven GitHub repos?

Some projects have only been around for a few weeks to a year, yet they have hundreds, if not thousands, of commits and a star count high enough to seem suspect.

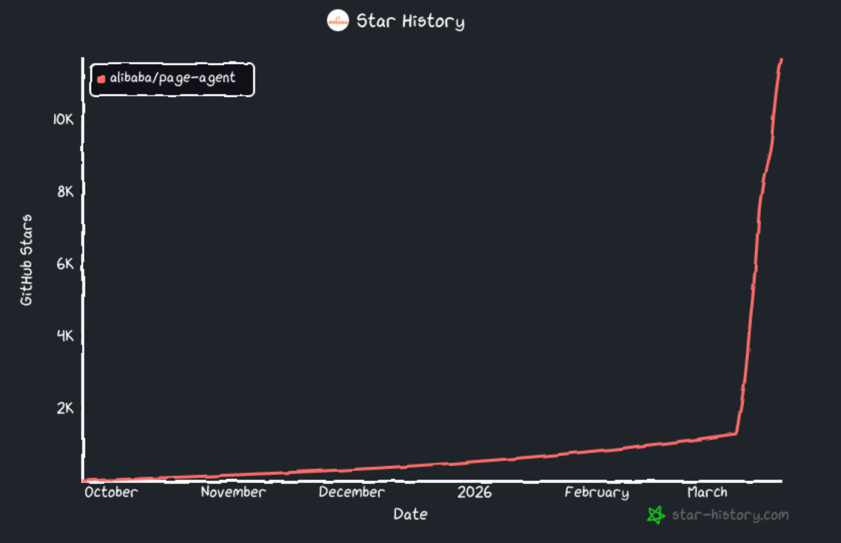

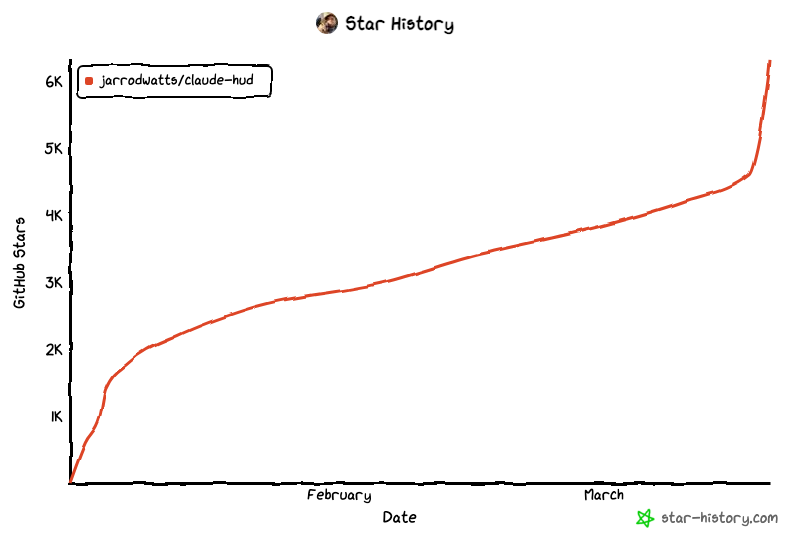

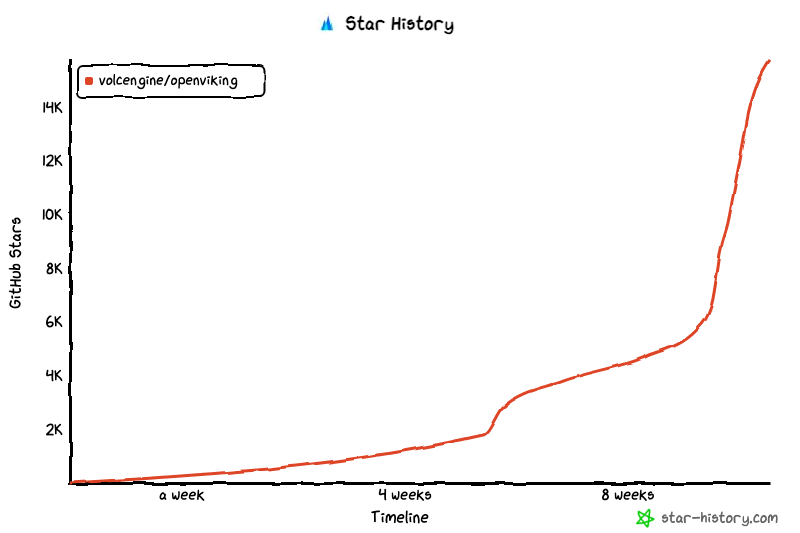

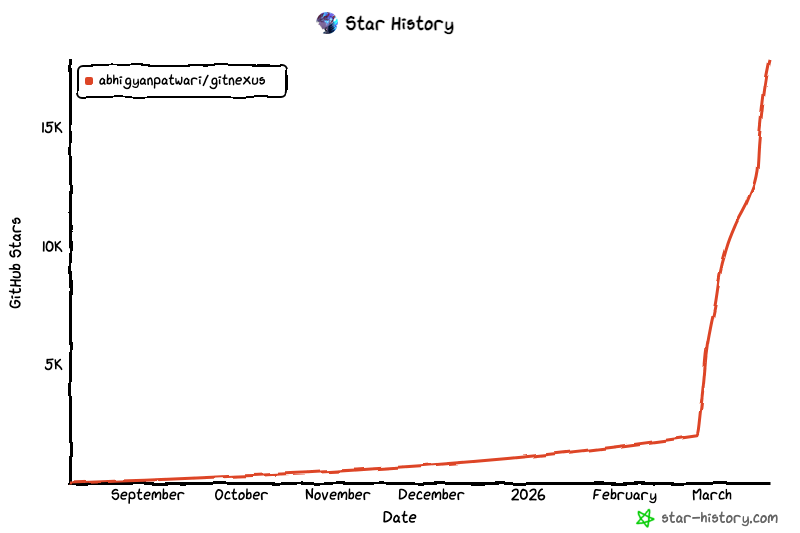

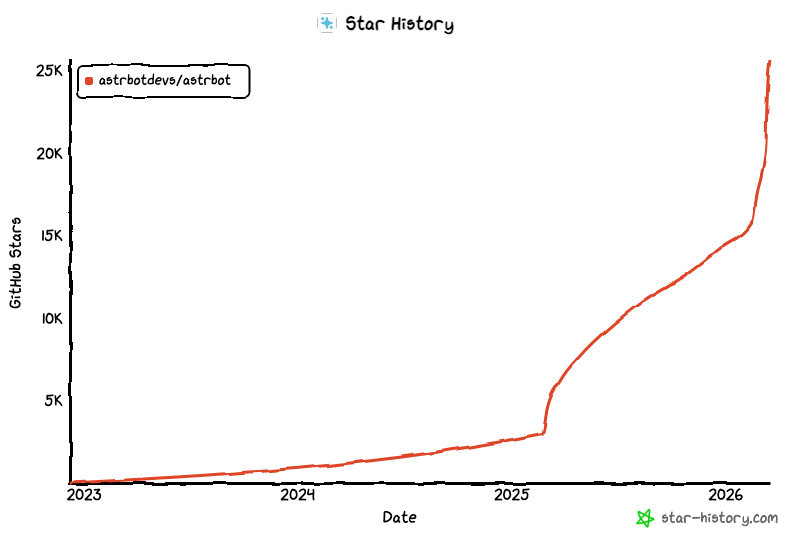

Such repositories share a pattern in how their README.md is formatted, and quite a few of them track stars with these charts (see examples below).

What interests me is that many, many repos have these sudden spikes, too many to be a coincidence of sudden stardom. I think it’s gamification of GitHub stars.

I have no illusions that this type of GitHub star gamification is new. However, it seems to have accelerated to such a degree that the signal is getting lost in the noise.

Trying to discover new and interesting projects on GitHub that are trustworthy, long-tenured or at least have a decent track record is becoming increasingly challenging.

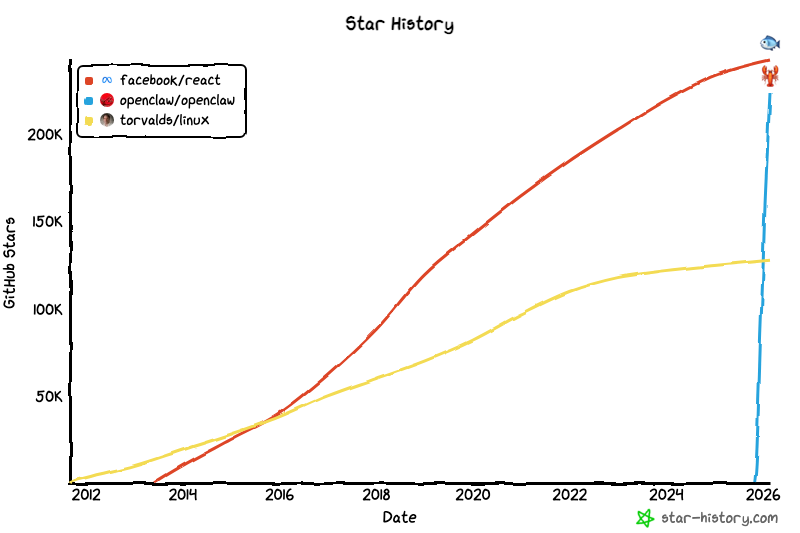

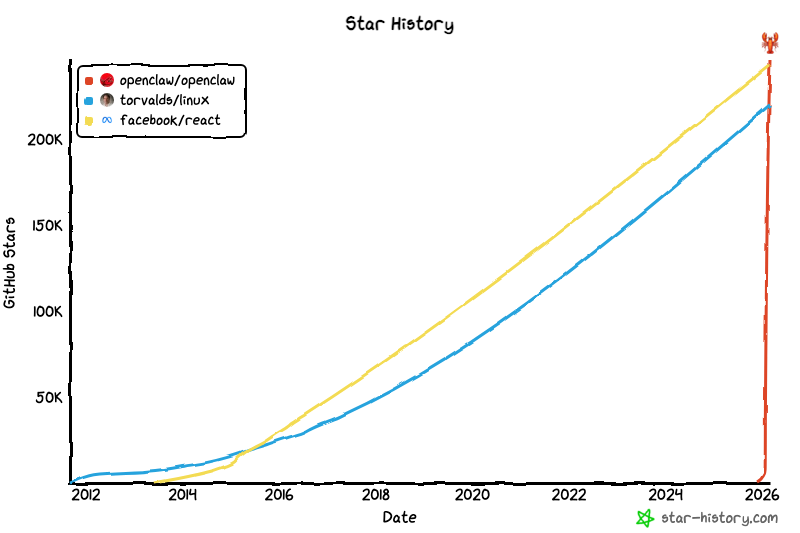

The Extreme Case of OpenClaw

OpenClaw’s meteoric rise saw it exceed both Linux and React in star count within mere months.

Late-February, 2026

March 1, 2026

So What Does This Mean?

It’s interesting to observe, and it raises questions about the integrity of GitHub’s star system as a measure of project popularity and quality. Stars are not the only measure, but they have been a significant factor in how developers discover and evaluate projects on GitHub, and in deciding whether to incorporate them into their own projects.

If AI-driven repositories are somehow gaming the system, or are subject to some sort of feedback loop that inflates their star counts, the result is a distorted perception of which projects are truly valuable and safe to use.

The situation is analogous to Amazon product reviews. If you have a product with 1000 reviews, and 900 of them are fake, it becomes very difficult to discern the true quality of the product. Similarly, if a GitHub repository has a high star count that is artificially inflated, well, you get the idea.

Possible Motivations

Donations: Many projects have a way to support developers through donations, and GitHub itself makes this very easy to set up.

Employment Opportunities: Having been at the helm of a project with a high star count sounds impressive to potential employers.

Future Monetization: As the standard playbook goes for tech: get the users hooked, then start adding a price. If a product makes its way as a dependency into many teams or businesses, it becomes a prime candidate for future monetization. This isn’t inherently wrong; I’m just noting the motivation to accelerate demand through gamification of stars.

What Can Be Done?

GitHub could implement measures to detect and mitigate artificial inflation of star counts. This could include:

- Identifying patterns of star inflation, such as sudden spikes in stars or a large number of stars from new accounts.

- Letting users see how many stars came from paying GitHub users versus free accounts, shown separately from the total we see today.

- Determining whether a new repository is receiving an unusually high number of stars in a short period of time, and flagging it for review.
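To make the "sudden spike" idea from the list above concrete, here is a minimal sketch of one way such a check could work. It is not how GitHub does or would do it. It assumes you already have a list of new stars per day for a repository, and the function name, window size, multiplier and minimum count are all hypothetical values chosen for illustration.

```python
from statistics import median


def find_star_spikes(daily_stars, window=7, factor=5.0, min_stars=20):
    """Return indices of days whose star count far exceeds the recent baseline.

    daily_stars: new stars per day, oldest first (assumed already collected).
    window:      number of preceding days used as the baseline.
    factor:      multiple of the baseline median that counts as a spike.
    min_stars:   ignore days below this count so tiny repos don't trigger.
    """
    spikes = []
    for i in range(window, len(daily_stars)):
        baseline = median(daily_stars[i - window:i])
        # Use at least 1 as the baseline so a quiet week doesn't make
        # every small uptick look like a huge multiple.
        threshold = max(baseline, 1) * factor
        if daily_stars[i] >= min_stars and daily_stars[i] > threshold:
            spikes.append(i)
    return spikes


# Made-up history: a quiet repo that suddenly gains ~2000 stars in two days.
history = [3, 5, 4, 6, 2, 5, 4, 3, 900, 1200, 6, 4]
print(find_star_spikes(history))  # [8, 9]
```

Using the median rather than the mean keeps one spike day from inflating the baseline for the days right after it. A flagged day is only a prompt for a closer look, since a legitimate launch or a front-page mention produces the same shape.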

Better yet, perhaps we need a new way to discover repositories that doesn’t rely on a rating system subject to severe feedback loops.

More Examples of Possible AI-Driven GitHub Star Gamification

Pay particular attention to the timeframes and scales.